最近一直在为深度学习模型训练而苦恼,NVIDIA GTX 960、2G显存,即使是跑迁移学习的模型,也慢的要死,训练过程中电脑还基本上动不了。曾考虑升级显卡,但当时买的是品牌机,可扩展性很差,必须要买一台新的主机。到京东上瞧了一下,RTX 2080 TI显卡的游戏主机,差不多需要两万,更别说那些支持多路显卡的深度学习主机。无奈之下,只好尝试一下谷歌的GPU云计算平台。谷歌的GPU云计算平台并不是新鲜事物,我在去年就写过关于它的两篇文章:

在那篇文章中,我写到了Google Colab的不足:

Google Colab最大的不足就是使用虚拟机,这意味着什么呢?

这意味着我们自行安装的库,比如Keras,在虚拟机重启之后,就会被复原。为了能够持久保存数据,我们可以借助Google Drive。还记得我们之前为了挂载Google Drive所做的操作吗?这也同样意味着重启之后,要使用Google Drive,又要把上面的步骤执行一遍。更糟糕的是,不仅仅是虚拟机重启会这样,在Google Colab的会话断掉之后也会这样,而Google Colab的会话最多能够持续12小时。

然而,经过这次的试用,感觉上述缺点还是可以克服的:

- 在Google Colab上安装软件特别快,毕竟主机运行在国外,所以不管是pip安装python软件包,还是用apt安装ubuntu安装包,都是飞快。只要我们把软件安装的命令保存下来,下次再运行就是点点鼠标的事情。

- Google提供了非常简单的脚本来挂载Google Drive,虽然仍然需要授权过程,但过程简化了许多。而且经过这几天的试用,即使重启runtime,也不需要重新挂载。

- Google Colab的硬件经过大幅升级,加上我们最多的是进行迁移学习的模型训练,这个训练量比起从头训练要小的多,也就是说我们通常并不需要在Google Colab上训练超过12小时。

在最新的Google Colab中,加强了和github的集成,我们编写的Jupyter Notebook可以非常方便的同步到github。

下面我就以 识狗君 这个小应用 (请参考:github.com/mogoweb/AID…) 说说如何在Google Colab上进行深度学习模型的训练。在这篇文章中你将学会:

- 在Google Colab中使用Tensorflow 2.0 alpha

- 挂载Google drive,并下载stanford 狗类别数据集到Google drive

- 使用tensorflow dataset API加载图片集

- 使用Tensorflow keras进行迁移学习,训练狗狗分类模型

如果你还没有使用过Google Colab,请参阅前面两篇文章。

安装tensorflow 2.0 alpha

!pip install tensorflow-gpu==2.0.0-alpha0

原来runtime的tensorflow版本是1.13,升级后根据提示,点击 RESTART RUNTIME 按钮,重启运行时。

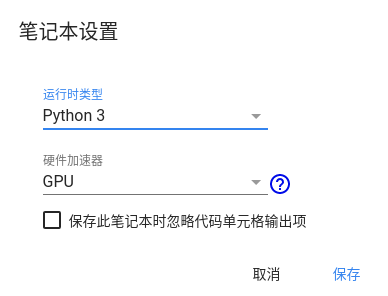

在顶部的菜单选择 代码执行程序 ⇨ 更改运行时类型,在笔记本设置 ⇨ 硬件加速器,选择GPU。这里还有一个TPU的选项,但是尝试后,发现并没有什么效果,后续再研究。

可以检验一下版本是否正确,GPU是否启用:

import tensorflow as tf

from tensorflow.python.client import device_lib

print(tf.__version__)

tf.test.is_gpu_available()

tf.test.gpu_device_name()

device_lib.list_local_devices()

输出如下:

2.0.0-alpha0

True

'/device:GPU:0'

[name: "/device:CPU:0"

device_type: "CPU"

memory_limit: 268435456

locality {

}

incarnation: 5989980768649980945, name: "/device:XLA_GPU:0"

device_type: "XLA_GPU"

memory_limit: 17179869184

locality {

}

incarnation: 17264748630327588387

physical_device_desc: "device: XLA_GPU device", name: "/device:XLA_CPU:0"

device_type: "XLA_CPU"

memory_limit: 17179869184

locality {

}

incarnation: 6451752053688610824

physical_device_desc: "device: XLA_CPU device", name: "/device:GPU:0"

device_type: "GPU"

memory_limit: 14892338381

locality {

bus_id: 1

links {

}

}

incarnation: 17826891841425573733

physical_device_desc: "device: 0, name: Tesla T4, pci bus id: 0000:00:04.0, compute capability: 7.5"]

可以看到,GPU用的是Tesla T4,这可是相当牛逼的显卡,有2560个CUDA核心,集成320个Tensor Core核心,FP32浮点性能8.1TFLOPS,INT4浮点性能最高260TFLOPS。

你还可以查看CPU和内存信息:

!cat /proc/meminfo

!cat /proc/cpuinfo

挂载Google drive,并下载数据集

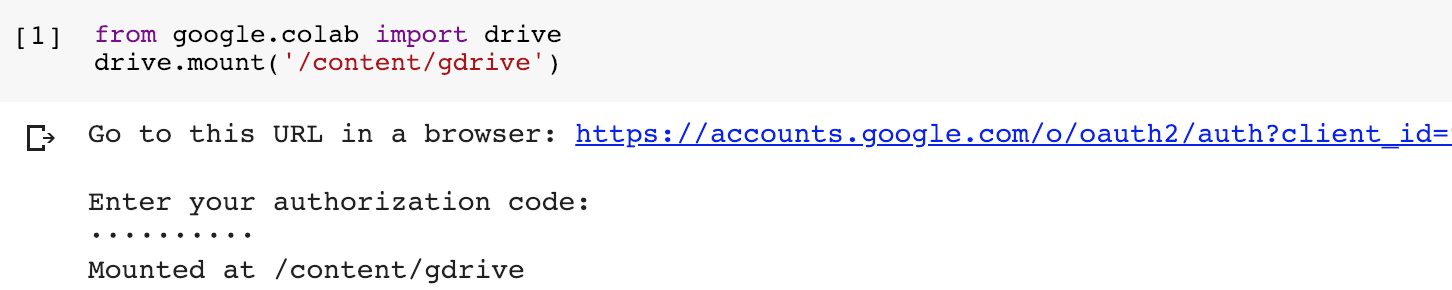

from google.colab import drive

drive.mount('/content/gdrive')

运行后会出现一个链接,点击链接,进行授权,可以得到授权码,粘帖到框框中,然后回车,就可以完成挂载,是不是很方便?

挂载之后,Google云端硬盘的内容就位于 /content/gdrive/My Drive/ 下,我在Colab Notebooks下建立了一个AIDog的目录作为项目文件夹,将当前目录切换到该文件夹,方便后续的操作。

import os

project_path = '/content/gdrive/My Drive/Colab Notebooks/AIDog' #change dir to your project folder

os.chdir(project_path) #change dir

现在当前目录就是 /content/gdrive/My Drive/Colab Notebooks/AIDog ,接下来下载并解压狗狗数据集:

!wget http://vision.stanford.edu/aditya86/ImageNetDogs/images.tar

!tar xvf images.tar

图片解压到Images文件夹,我们可以使用ls命令看看是否成功:

!ls ./Images

使用tensorflow dataset API

图片文件按照类别分目录组织,我们可以手动将数据集划分为训练数据集和验证数据集,因为图片数量比较多,我们可以选择10%作为验证数据集:

images_root = pathlib.Path(FLAGS.image_dir)

all_image_paths = list(images_root.glob("*/*"))

all_image_paths = [str(path) for path in all_image_paths]

random.shuffle(all_image_paths)

images_count = len(all_image_paths)

print(images_count)

split = int(0.9 * images_count)

train_images = all_image_paths[:split]

validate_images = all_image_paths[split:]

label_names = sorted(item.name for item in images_root.glob("*/") if item.is_dir())

label_to_index = dict((name, index) for index, name in enumerate(label_names))

train_image_labels = [label_to_index[pathlib.Path(path).parent.name] for path in train_images]

validate_image_labels = [label_to_index[pathlib.Path(path).parent.name] for path in validate_images]

train_path_ds = tf.data.Dataset.from_tensor_slices(train_images)

train_image_ds = train_path_ds.map(load_and_preprocess_image, num_parallel_calls=AUTOTUNE)

train_label_ds = tf.data.Dataset.from_tensor_slices(tf.cast(train_image_labels, tf.int64))

train_image_label_ds = tf.data.Dataset.zip((train_image_ds, train_label_ds))

validate_path_ds = tf.data.Dataset.from_tensor_slices(validate_images)

validate_image_ds = validate_path_ds.map(load_and_preprocess_image, num_parallel_calls=AUTOTUNE)

validate_label_ds = tf.data.Dataset.from_tensor_slices(tf.cast(validate_image_labels, tf.int64))

validate_image_label_ds = tf.data.Dataset.zip((validate_image_ds, validate_label_ds))

model = build_model(len(label_names))

num_train = len(train_images)

num_val = len(validate_images)

steps_per_epoch = round(num_train) // BATCH_SIZE

validation_steps = round(num_val) // BATCH_SIZE

其中加载和预处理图片的代码如下:

def preprocess_image(image):

image = tf.image.decode_jpeg(image, channels=3)

image = tf.image.resize(image, [299, 299])

image /= 255.0 # normalize to [0,1] range

# normalized to the[-1, 1] range

image = 2 * image - 1

return image

def load_and_preprocess_image(path):

image = tf.io.read_file(path)

return preprocess_image(image)

使用tensorflow keras框架进行迁移学习

我们可以在inception v3的基础上进行迁移学习,保留除输出层之外所有层的结构,然后增加一个softmax层:

def build_model(num_classes):

# Create the base model from the pre-trained model Inception V3

base_model = keras.applications.InceptionV3(input_shape=IMG_SHAPE,

# We cannot use the top classification layer of the pre-trained model as it contains 1000 classes.

# It also restricts our input dimensions to that which this model is trained on (default: 299x299)

include_top=False,

weights='imagenet')

base_model.trainable = False

# Using Sequential API to stack up the layers

model = keras.Sequential([

base_model,

keras.layers.GlobalAveragePooling2D(),

keras.layers.Dense(num_classes,

activation='softmax')

])

# Compile the model to configure training parameters

model.compile(optimizer='adam',

loss='sparse_categorical_crossentropy',

metrics=['accuracy'])

return model

接下来喂入dataset,进行训练,需要注意的是,Google的官方文档上的示例对ds做了一个shuffle的操作,但在实际运行中,这个操作特别占用内存,其实在前面的代码中,我们已经对Images文件的加载做了shuffle处理,所以这里其实不用额外做一次shuffle:

model = build_model(len(label_names))

ds = train_image_label_ds

ds = ds.repeat()

ds = ds.batch(BATCH_SIZE)

# `prefetch` lets the dataset fetch batches, in the background while the model is training.

ds = ds.prefetch(buffer_size=AUTOTUNE)

model.fit(ds,

epochs=FLAGS.epochs,

steps_per_epoch=steps_per_epoch,

validation_data=validate_image_label_ds.repeat().batch(BATCH_SIZE),

validation_steps=validation_steps,

callbacks=[tensorboard_callback, model_checkpoint_callback])

在Google Colab上训练,一个epoch只需要一分多钟,经过实验,差不多10个epoch,验证数据集的精度就可以趋于稳定,所以整个过程大约十几分钟,远远低于系统限制的十二个小时。

W0513 11:13:28.577609 139977708910464 training_utils.py:1353] Expected a shuffled dataset but input dataset `x` is not shuffled. Please invoke `shuffle()` on input dataset.

Epoch 1/10

358/358 [==============================] - 80s 224ms/step - loss: 1.2201 - accuracy: 0.7302 - val_loss: 0.4699 - val_accuracy: 0.8678

Epoch 2/10

358/358 [==============================] - 74s 206ms/step - loss: 0.4624 - accuracy: 0.8692 - val_loss: 0.4347 - val_accuracy: 0.8686

Epoch 3/10

358/358 [==============================] - 75s 209ms/step - loss: 0.3582 - accuracy: 0.8939 - val_loss: 0.4164 - val_accuracy: 0.8726

Epoch 4/10

358/358 [==============================] - 75s 210ms/step - loss: 0.2795 - accuracy: 0.9175 - val_loss: 0.4175 - val_accuracy: 0.8750

Epoch 5/10

358/358 [==============================] - 76s 213ms/step - loss: 0.2275 - accuracy: 0.9357 - val_loss: 0.4174 - val_accuracy: 0.8726

Epoch 6/10

358/358 [==============================] - 75s 210ms/step - loss: 0.1914 - accuracy: 0.9478 - val_loss: 0.4227 - val_accuracy: 0.8702

Epoch 7/10

358/358 [==============================] - 75s 209ms/step - loss: 0.1576 - accuracy: 0.9584 - val_loss: 0.4415 - val_accuracy: 0.8654

Epoch 8/10

358/358 [==============================] - 75s 209ms/step - loss: 0.1368 - accuracy: 0.9639 - val_loss: 0.4364 - val_accuracy: 0.8686

Epoch 9/10

358/358 [==============================] - 76s 211ms/step - loss: 0.1162 - accuracy: 0.9729 - val_loss: 0.4401 - val_accuracy: 0.8686

Epoch 10/10

358/358 [==============================] - 75s 211ms/step - loss: 0.1001 - accuracy: 0.9768 - val_loss: 0.4574 - val_accuracy: 0.8678

模型训练完毕之后,可以输出为saved_model格式,方便使用tensorflow serving进行部署:

keras.experimental.export_saved_model(model, model_path)

至此,整个过程完毕,训练出的模型保存于 model_path,我们可以下载下来,用于狗狗识别。

以上完整源代码,可以访问我google云端硬盘:

colab.research.google.com/drive/1KSEk…

参考

- www.tensorflow.org/tutorials/l…

- zhuanlan.zhihu.com/p/30751039

- towardsdatascience.com/downloading…

- medium.com/@himanshura…

你还可以看